```python
from keras import layers
from keras.models import Sequential
from keras.preprocessing.image import ImageDataGenerator
from keras.utils.test_utils import get_test_data


def test_image_data_generator_training():
    img_gen = ImageDataGenerator(rescale=1.)  # Dummy ImageDataGenerator (rescale=1. leaves pixels unchanged)
    (x_train, y_train), (x_test, y_test) = get_test_data(num_train=500,
                                                         ...)
    ...
    model = Sequential([
        ...
        layers.Conv2D(filters=4, kernel_size=(3, 3),
                      ...),
        ...
        layers.Dense(y_test.shape[-1], activation='softmax')  # one output unit per class
    ])
    ...
    history = model.fit_generator(img_gen.flow(x_train, y_train, batch_size=16),
                                  ...
                                  validation_data=img_gen.flow(x_test, y_test,
                                                               ...))
    assert history.history[...] > 0.75
    model.evaluate_generator(img_gen.flow(x_train, y_train, batch_size=16))
```

```python
from os import listdir

import cv2
import numpy as np
from keras.preprocessing.image import ImageDataGenerator
from keras.utils import np_utils
from sklearn.model_selection import train_test_split


def train(self, save_model_to_file=True, rotation_range=20, width_shift_range=0.5, height_shift_range=0.2):
    """ Trains the model using the dataset in letters_folder """
    for imgName in listdir(self.letters_folder):
        img = cv2.imread(self.letters_folder + "/" + imgName, cv2.IMREAD_GRAYSCALE)
        # Get the label from the image path and then get the index from the letters list
        ...

    X_train, X_test, y_train, y_test = train_test_split(
        data, labels, test_size=0.33, random_state=42)
    # Add a single channel axis in channels-first order: (samples, 1, rows, cols)
    X_train = X_train.reshape(X_train.shape[0], 1, self.img_rows, self.img_cols)
    X_test = X_test.reshape(X_test.shape[0], 1, self.img_rows, self.img_cols)

    # convert class vectors to binary class matrices
    Y_train = np_utils.to_categorical(y_train, self.nb_classes)
    Y_test = np_utils.to_categorical(y_test, self.nb_classes)

    # this will do preprocessing and realtime data augmentation
    datagen = ImageDataGenerator(
        rotation_range=rotation_range,  # randomly rotate images in the range (degrees, 0 to 180)
        width_shift_range=width_shift_range,  # randomly shift images horizontally (fraction of total width)
        height_shift_range=height_shift_range)  # randomly shift images vertically (fraction of total height)
    ...
    # fit the model on the batches generated by datagen.flow()
    history = ..._generator(datagen.flow(X_train, Y_train, batch_size=self.batch_size),
                            ...)
    ..._weights(self.weights_path, overwrite=True)
    ...
    X_train = np.concatenate((X_train, X_val1), axis=0)
```
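The `train` method above relies on two array manipulations. `np_utils.to_categorical` turns integer class labels into one-hot rows, and `reshape` adds a single channel axis in channels-first order `(samples, 1, rows, cols)`. Below is a minimal NumPy sketch of both steps. The helper names `one_hot` and `to_channels_first` are hypothetical, and the sketch assumes labels are integers in `[0, nb_classes)`.

```python
import numpy as np


def one_hot(labels, nb_classes):
    """Return a (len(labels), nb_classes) float matrix with a single 1 per row."""
    labels = np.asarray(labels, dtype=int)
    out = np.zeros((labels.shape[0], nb_classes), dtype=np.float32)
    # For each row i, set column labels[i] to 1.
    out[np.arange(labels.shape[0]), labels] = 1.0
    return out


def to_channels_first(images, img_rows, img_cols):
    """Reshape a stack of grayscale images to (samples, 1, rows, cols)."""
    images = np.asarray(images)
    return images.reshape(images.shape[0], 1, img_rows, img_cols)


if __name__ == "__main__":
    print(one_hot([0, 2, 1], 3))
    x = np.zeros((5, 28, 28))
    print(to_channels_first(x, 28, 28).shape)  # (5, 1, 28, 28)
```

Passing `nb_classes` explicitly, as the original method does with `self.nb_classes`, keeps the matrix width fixed even when a split happens to miss the highest class.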

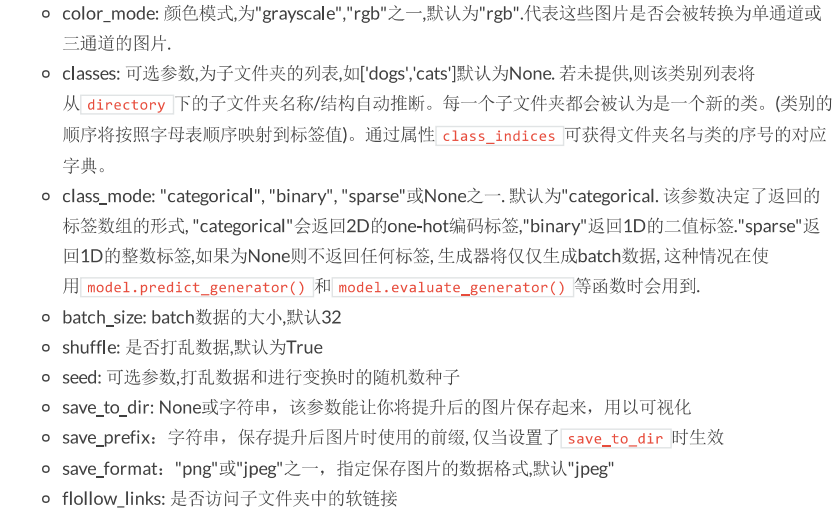

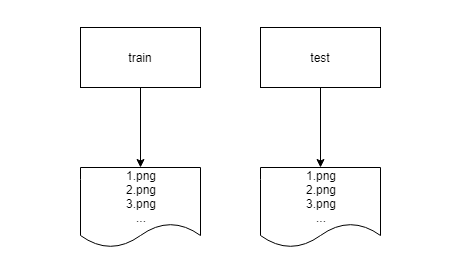

The `flow_from_directory` function is particularly helpful because it loads batches of images from a labeled directory structure, where the name of each subdirectory is the class name for a classification task. For instance, you might use a directory structure like so for the MNIST dataset:

In this case, each subdirectory of the `train` directory contains images corresponding to that particular class (i.e. …). Loading this dataset with `ImageDataGenerator` could be accomplished like so:

```python
train_data = datagen.flow_from_directory('./train')
```

You'll then see output like so, indicating the number of images and classes discovered:

```
Found 1000 images belonging to 10 classes.
```

Frequently, however, you'll also have a `test` directory that has no subdirectories, because you don't know the classes of those images. Inside `test` is simply a variety of images of unknown class. You can't use the `flow_from_directory` function as we did above, because you'll run into the following issue:

```python
train_data = datagen.flow_from_directory('./test')
```

It can't find any classes because `test` has no subdirectories. Without classes it can't load your images, as you see in the log output above.

There is a workaround, however. You can specify the parent directory of the `test` directory and state that you only want to load the `test` "class":

```python
test_data = datagen.flow_from_directory('.', classes=[...])
```

The `classes` argument tells `flow_from_directory` that you only want to load images of the specified class(es), in this case the `test` "class". Now `test_data` will contain only the one `test` "class", so you can work with your test dataset just as you would with the labeled training data from above.

In fact, you can also use this trick to exclude subdirectories of your dataset for whatever reason. Going back to the MNIST problem above, if you only cared about recognizing digits 0 through 3, you could do something like this:

```python
train_data = datagen.flow_from_directory('.
```

The `ImageDataGenerator` class in Keras is a really valuable tool. I've recently written about using it for training/validation splitting of images. It's also helpful for data augmentation: it applies random transformations (such as rotations and shifts) to your image dataset to reduce overfitting and improve how well your models generalize.
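As a rough illustration of one such random transformation, here is a NumPy sketch of a horizontal shift in the spirit of `width_shift_range`. A float below 1 is read as a fraction of the image width, and the image moves by a random whole number of pixels within that range. The function name `random_width_shift` and the zero-filling of vacated columns are assumptions. Keras applies an affine transform and fills borders according to its `fill_mode` (default `'nearest'`), so its results differ at the edges.

```python
import numpy as np


def random_width_shift(image, width_shift_range, rng):
    """Shift a (rows, cols) image left or right by up to width_shift_range * cols pixels.

    Vacated columns are filled with zeros (a simplification). Returns (shifted_image, shift).
    """
    rows, cols = image.shape
    max_shift = int(width_shift_range * cols)
    # Generator.integers excludes the upper bound, so add 1 to make the range symmetric.
    shift = int(rng.integers(-max_shift, max_shift + 1))
    shifted = np.zeros_like(image)
    if shift > 0:
        shifted[:, shift:] = image[:, :cols - shift]  # move content to the right
    elif shift < 0:
        shifted[:, :shift] = image[:, -shift:]  # move content to the left
    else:
        shifted[:] = image
    return shifted, shift


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    img = np.arange(1, 13).reshape(3, 4)
    out, s = random_width_shift(img, 0.5, rng)
    print("shift:", s)
    print(out)
```

Drawing a fresh shift for every image in every epoch is what makes this augmentation "realtime": the model rarely sees exactly the same pixels twice.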
